“What’s the difference between crawling, displaying, indexing and ranking?”

Lily Ray recently shared that she asks this question to potential employees when hiring the Amsive Digital SEO team. Danny Sullivan from Google thinks it’s an excellent one.

As basic as it may seem, it is not uncommon for some practitioners to mix up the basic stages of seeking and completely mix up the process.

In this article, we get a refresher on how search engines work and discuss each stage of the process.

Contents

Why knowing the difference matters

I recently worked as an expert witness in a trademark infringement case where the counter-witness misunderstood the stages of the search.

Two small companies stated that they each had the right to use similar brand names.

The opposition party “expert” erroneously concluded that my client used inappropriate or hostile SEO to outrank the plaintiff’s website.

He also made several critical mistakes when describing Google’s processes in his expert report, claiming that:

An essential defense in lawsuits is to try to rule out the findings of an expert witness – which can happen if a person can demonstrate to the court that they do not have the basic qualifications necessary to be taken seriously.

Since their expert was clearly not qualified to testify on SEO matters, I presented his misrepresentations of Google’s process as evidence to support the claim that he was not properly qualified.

This may sound harsh, but this unqualified expert made many basic and apparent mistakes in presenting information to the court. He falsely presented my client as somehow engaging in unfair business practices through SEO while ignoring questionable behavior on the part of the plaintiff (who blatantly used black hat SEO when my client was not).

The counter-expert in my lawsuit is not alone in this misunderstanding of the search stages used by the leading search engines.

There are prominent search marketers who have also merged the stages of search engine processes, leading to misdiagnoses of underperformance in the SERPs.

I’ve heard someone say, “I think Google has penalized us, so we can’t be in search results!” – when in fact they had missed a key setting on their web servers that made their site’s content inaccessible to Google.

Automated penalties may have been categorized as part of the ranking phase. In reality, these websites had problems in the crawling and rendering phases that made indexing and ranking problematic.

If there are no notifications in the Google Search Console of a manual action, one should first focus on common issues in each of the four stages that determine how search works.

It’s not just semantics

Not everyone agreed with Ray and Sullivan’s emphasis on the importance of understanding the differences between crawling, rendering, indexing, and ranking.

I noticed that some practitioners view such concerns as mere semantics or unnecessary “gatekeeping” by elite SEOs.

To some extent, some SEO veterans have indeed mixed up the meanings of these terms very loosely. This can happen across all disciplines as those imbued with industry jargon with a shared understanding of what they mean. There is nothing wrong with that in itself.

We also tend to anthropomorphize search engines and their processes, because interpreting things by describing them as familiar features makes understanding easier. There’s nothing wrong with that either.

But this inaccuracy when talking about technical processes can be confusing and make it more challenging for those trying to learn more about the discipline of SEO.

One can use the terms casually and imprecisely, only to a degree or as shorthand in conversation. That said, it is always best to know and understand the precise definitions of the stages of search engine technology.

The 4 stages of search

There are many different processes involved in including content from the Internet in your search results. In some ways, it can be a gross simplification to say that there are only a handful of discrete phases to make it happen.

Each of the four phases I cover here has different sub-processes that can occur within it.

Even beyond that, there are important processes that can be asynchronous, such as:

What follows are the primary search stages required for web pages to appear in search results.

Crawling

Crawling takes place when a search engine requests web pages from the servers of websites.

Imagine Google and Microsoft Bing sitting in front of a computer typing or clicking a link to a webpage in their browser window.

For example, the engines of the search engines visit web pages that are similar to how you do. Every time the search engine visits a web page, it collects a copy of that page and notes all the links on that page. After the search engine collects that web page, it visits the next link in the list of links yet to be visited.

This is called “crawling” or “spidering”, which is apt because metaphorically the web is a giant, virtual web of interconnected links.

The data collection programs used by search engines are called “spiders”, “bots” or “crawlers”.

Google’s primary crawler is “Googlebot”, while Microsoft Bing has “Bingbot”. Each has different specialized bots for visiting ads (i.e. GoogleAdsBot and AdIdxBot), mobile pages, and more.

This stage of search engine processing of web pages seems simple, but there is a lot of complexity in what happens just at this stage.

Think about how many web server systems there might be, with different operating systems of different versions, along with different content management systems (i.e. WordPress, Wix, Squarespace), and then the unique customizations of each website.

Many problems can prevent search engine crawlers from crawling pages, which is a great reason to study the details at this stage.

First, the search engine has to find a link to the page at some point before it can query and visit the page. (Under certain configurations, the search engines are known to suspect that there could be other undisclosed links, such as stepping up the link hierarchy at the subdirectory level or through some limited internal website search forms.)

Search engines can discover the links of web pages in the following ways:

In some cases, a website will instruct the search engines not to crawl one or more web pages through the robots.txt file, which is located at the root level of the domain and web server.

Robots.txt files may contain multiple guidelines instructing search engines to prohibit the website from crawling specific pages, subdirectories, or the entire website.

Instructing search engines not to crawl a page or section of a website does not mean that those pages cannot appear in search results. Keeping them from being crawled in this way can seriously affect their ability to rank well for their keywords.

In still other cases, search engines may have trouble crawling a website if the site automatically blocks the bots. This can happen when the website’s systems have detected that:

However, search engine bots are programmed to automatically change delay rates between requests when they detect that the server is struggling to keep up with the query.

For larger websites and websites with frequently changing content on their pages, “crawl budget” can become a factor in whether search bots can crawl all pages.

Essentially, the web is something of an infinite space of web pages with different update rates. The search engines may not get around to visiting every single page, so they prioritize the pages they will crawl.

Websites with a large number of pages or that are slower to respond may use up their available crawl budget before all their pages are crawled if they have a relatively lower ranking weight compared to other websites.

It’s worth noting that search engines also request all the files needed to build the web page, such as images, CSS, and JavaScript.

As with the web page itself, if the additional resources that help build the web page are not accessible to the search engine, it can affect how the search engine interprets the web page.

Rendering

When the search engine crawls a web page, it will “render” the page. This means that the HTML, JavaScript, and cascading stylesheet (CSS) information is used to generate what the page will look like to desktop and/or mobile users.

This is important so that the search engine can understand how the content of the web page is displayed in context. By processing the JavaScript, they can ensure that they have all the content that a human user would see when visiting the page.

The search engines categorize the rendering step as a sub-process within the crawl phase. I’ve listed it here as a separate step in the process, because fetching a web page and then parsing the content to understand what it would look like compiled in a browser are two different processes.

Google uses the same rendering engine used by the Google Chrome browser, called “Rendertron”, which is built on the open-source Chromium browser system.

Bingbot uses Microsoft Edge as its engine to run JavaScript and display web pages. It’s also now built on the Chromium-based browser, so it essentially renders web pages in the same way as Googlebot.

Google stores copies of the pages in their repository in a compressed format. It seems likely that Microsoft Bing does this too (but I haven’t found any documentation confirming this). Some search engines may store a shortened version of web pages in terms of just the visible text, stripped of all formatting.

Rendering usually becomes a problem in SEO for pages that have important parts of the content that rely on JavaScript/AJAX.

Both Google and Microsoft Bing will run JavaScript to see all the content on the page, and more complex JavaScript constructs can be challenging for the search engines to work.

I’ve seen JavaScript constructed web pages that were essentially invisible to the search engines, resulting in very sub-optimal web pages that wouldn’t be able to rank for their search terms.

I’ve also seen cases where infinitely scrolling category pages on ecommerce websites didn’t perform well in search engines because the search engine couldn’t see as many links from the products.

Other conditions may also interfere with the display. For example, if there are one or more JaveScript or CSS files that are not accessible to the search engine bots because they are in subdirectories that are not allowed by robots.txt, it will be impossible to fully process the page.

Googlebot and Bingbot largely do not index pages that require cookies. Pages that conditionally provide some important elements based on cookies may also not be displayed completely or correctly.

Indexing

After a page is crawled and rendered, the search engines further process the page to determine whether it will be indexed or not, and to understand what the page is about.

The search engine index is functionally similar to an index of words found at the end of a book.

A book’s index contains all the key words and topics found in the book, with each word listed alphabetically, along with a list of the page numbers where the words/topics can be found.

A search engine index contains many keywords and keyword strings, linked to a list of all the web pages where the keywords are found.

The index bears some conceptual similarity to a database lookup table, which was originally the structure used by search engines. But the major search engines are now probably using something a few generations more sophisticated to accomplish the goal of looking up a keyword and returning all the URLs relevant to the word.

Using functionality to lookup all pages associated with a keyword is a time-saving architecture, as it would take extremely unworkable amounts of time to search all web pages for a keyword in real time, every time someone searches for it.

For various reasons, not all crawled pages are saved in the search index. For example, if a page contains a robots meta tag with a “noindex” statement, it instructs the search engine not to include the page in the index.

Likewise, a web page may contain an X-Robots-Tag in the HTTP header that instructs the search engines not to index the page.

In still other cases, a web page’s canonical tag may instruct a search engine that a page other than the current one should be considered the major version of the page, leading to other, non-canonical versions of the page being removed from the index.

Google has also stated that web pages should not be indexed if they are of low quality (pages with duplicate content, pages with thin content, and pages containing all or too much irrelevant content).

There is also a long history of suggesting that websites with insufficient collective PageRank may not have all of their web pages indexed – suggesting that larger websites with insufficient external links may not be indexed thoroughly.

Insufficient crawl budget can also cause a website to not have all its pages indexed.

An important part of SEO is diagnosing and correcting when pages are not being indexed. That’s why it’s a good idea to thoroughly study all the different issues that can get in the way of indexing web pages.

Ranking

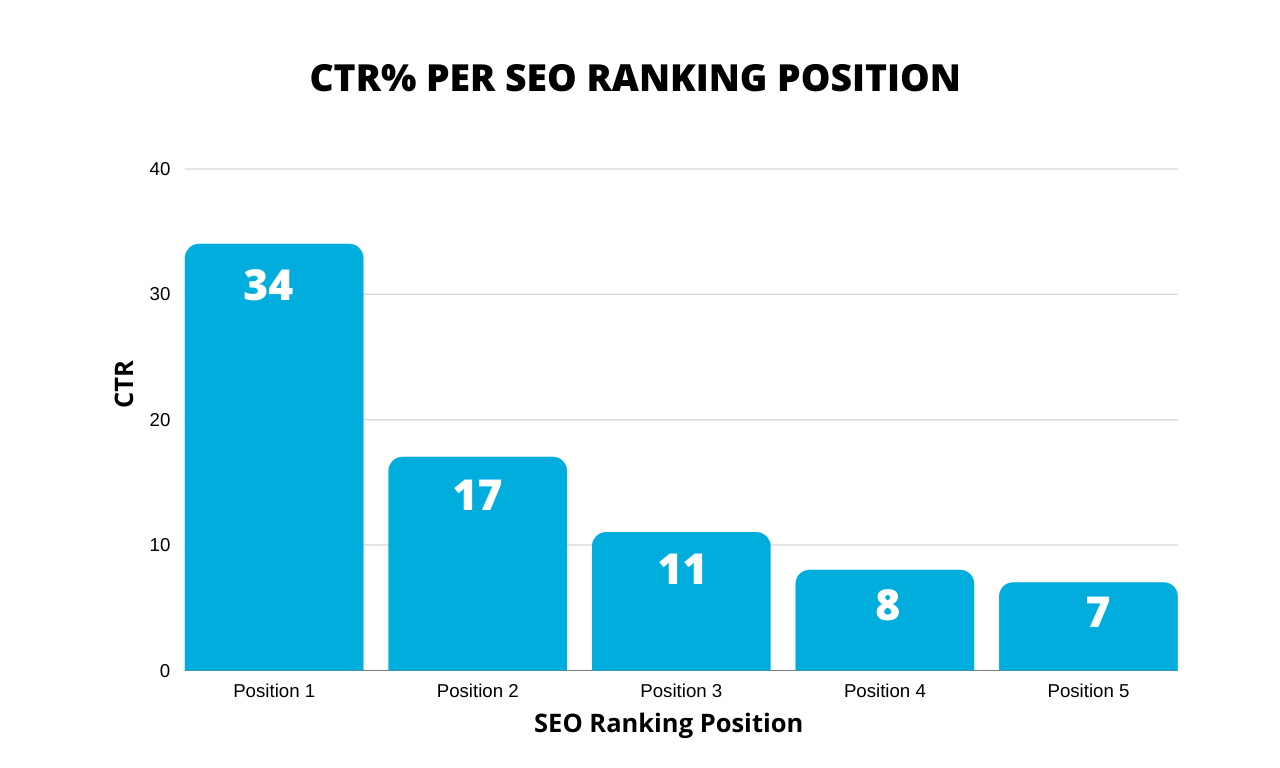

Web page ranking is probably the most targeted stage of search engine processing.

Once a search engine has a list of all the web pages associated with a particular keyword or keyword phrase, it must determine how those pages will rank when searching for that keyword.

If you work in the SEO industry, you are probably already quite familiar with some of what the ranking process involves. The search engine ranking process is also known as an “algorithm”.

The complexity associated with the ranking phase of search is so enormous that it alone deserves multiple articles and books to describe.

There are many criteria that can affect the ranking of a web page in search results. Google has said that over 200 ranking factors are used by its algorithm.

Within many of those factors, there can also be up to 50 “vectors” – things that can influence the impact of a single ranking signal on rankings.

PageRank is Google’s earliest version of its ranking algorithm, invented in 1996. It is based on a concept that links to a web page – and the relative importance of the sources of the links pointing to that web page – could be calculated to determine the relative position of the page. to all other pages.

A metaphor for this is that links are treated somewhat like votes, and pages with the most votes will win in a higher ranking than other pages with fewer links/votes.

Fast forward to 2022 and much of the old PageRank algorithm is still embedded in Google’s ranking algorithm. That link analysis algorithm also impacted many other search engines that developed similar methods.

The old Google algorithm method had to iteratively process the web’s links, passing the PageRank value dozens of times between pages before the ranking process was completed. This iterative calculation sequence over many millions of pages can take almost a month.

These days, new page links are introduced every day, and Google calculates rankings in a sort of drip method – allowing for pages and changes to be processed much faster without the need for a month-long link calculation process.

In addition, links are graded in a sophisticated way – withdrawing or downgrading paid links, traded links, spam links, unedited links, and more.

Broad categories of factors beyond links also influence rankings, including:

Conclusion

Understanding the key stages of search is an important item to become a professional in the SEO industry.

Some social media personalities think it’s “going too far” or “gatekeeping” not to hire a candidate just because they don’t know the differences between crawling, displaying, indexing, and ranking.

It is a good idea to know the differences between these processes. However, I wouldn’t consider a vague understanding of such terms a deal breaker.

SEO professionals come from different backgrounds and levels of experience. What is important is that they are trainable enough to learn and achieve a fundamental level of understanding.

The views expressed in this article are those of the guest author and not necessarily Search Engine Land. Staff authors are listed here.